Every day, millions of customers contact Amazon about problems: a package that never arrived, a charge they don't recognize, a device that stopped working. The people who help them are called Customer Service Associates, or CSAs. My team designed and owned the UX platform those associates work on, the Amazon Customer Care Center (AC3). That framework powers every business unit at Amazon CS: retail, devices, digital, shipping, and more. When leadership decided to invest in AI to make that work faster and more consistent, my team was the natural owner: we had the relationships, the research, and the platform to make it real.

A faster tool. But still too much time lost in every contact.

Even with AC3, too much of every contact was still manual work. Associates were stopping mid-conversation to look up policies. Asking customers questions Amazon's own data could already answer. Navigating step by step to resolutions the system could already predict.

I knew this not from dashboards but from being there.

Two approaches. Both I had to build.

I had been running regular site visits throughout my time at Amazon — flying to customer service sites across three continents to watch associates handle real contacts. Not recordings. Not metrics. Being in the room, watching what actually happened when a customer called in frustrated and a timer was running.

What you see in the room doesn't show up in a dashboard. An associate quietly opening a second browser tab mid-conversation. The micro-pause before they respond — reading, not thinking. The apology before a hold they already know is coming. That's the signal.

I secured budget for regular travel and set formal goals around site visits — making staying connected to associates a tracked design leadership commitment, not something that happened when schedules allowed.

Site visits only go so far — travel is expensive, scheduling is hard, and you're always limited to one site at a time. So I worked with my operational peers to stand up virtual side-by-sides: a program that let me and my team observe associates taking live contacts from anywhere in the world, with the same proximity as being in the room.

Between contacts, we'd talk to the associates directly. What slowed you down just now? What did you already know before you looked it up? What questions do you dread? That conversation — unprompted, between real contacts — is where the real problems surfaced.

This required partnership — getting operational leaders to allow live observation of contacts, setting up the technical infrastructure, and building trust with site managers globally. I owned that relationship. Without it, this kind of research doesn't happen.

Why my team was the right team for this.

My team's position was unusual. We owned the UX framework, the shared design layer and component system that every Amazon CS business unit builds on. If a designer at any CS vertical needed to ship a new feature, they used our patterns. That gave us something most product teams don't have: a clear view across all of them.

Designers on my team were embedded in retail, devices, digital, shipping, and Amazon Business, close enough to see the real problems their teams were facing. That network gave us a direct path to gather requirements, test early directions, and get signal from business leaders before we'd over-invested in any one approach. When themes emerged from our ongoing research (site visits, watching associates handle contacts, interviewing customers), we had the context to understand what we were actually seeing and the relationships to act on it quickly. My science counterpart was a close partner throughout. We watched prototype tests together, connected what we observed to what the models needed, and pushed the direction forward. We set the vision. They helped us make it real.

The AI had to earn trust before it could ask for it.

Every initiative here lived in a high-stakes context. A wrong AI suggestion could mean the wrong resolution, the wrong policy applied, or a customer commitment Amazon couldn't honor. The easy path is to put a chat interface on everything and call it AI. But a chatbot is just a hammer, and not every problem is a nail. We took a different approach. The associate tool already had a structure associates trusted: every contact was identified, categorized, and resolved through a consistent set of steps and screens. Rather than bolt AI onto the side as a separate chat window, I directed the team to surface AI intelligence through those same familiar patterns. The AI makes a suggestion with its supporting evidence visible. The associate reviews it and decides. Nothing is hidden, nothing is forced, and the manual path is always one click away.

Four design initiatives. Each solving a real problem. All of them working together.

Each of these came from something real we observed, not a product roadmap looking for AI use cases. And because my team owned the framework, each one was built to be used by any team across any business unit, not just the team that asked for it first. They also work together: an associate using Predictive Resolution is better served if they already trust CS Helper. Each layer of AI capability made the next one easier to accept.

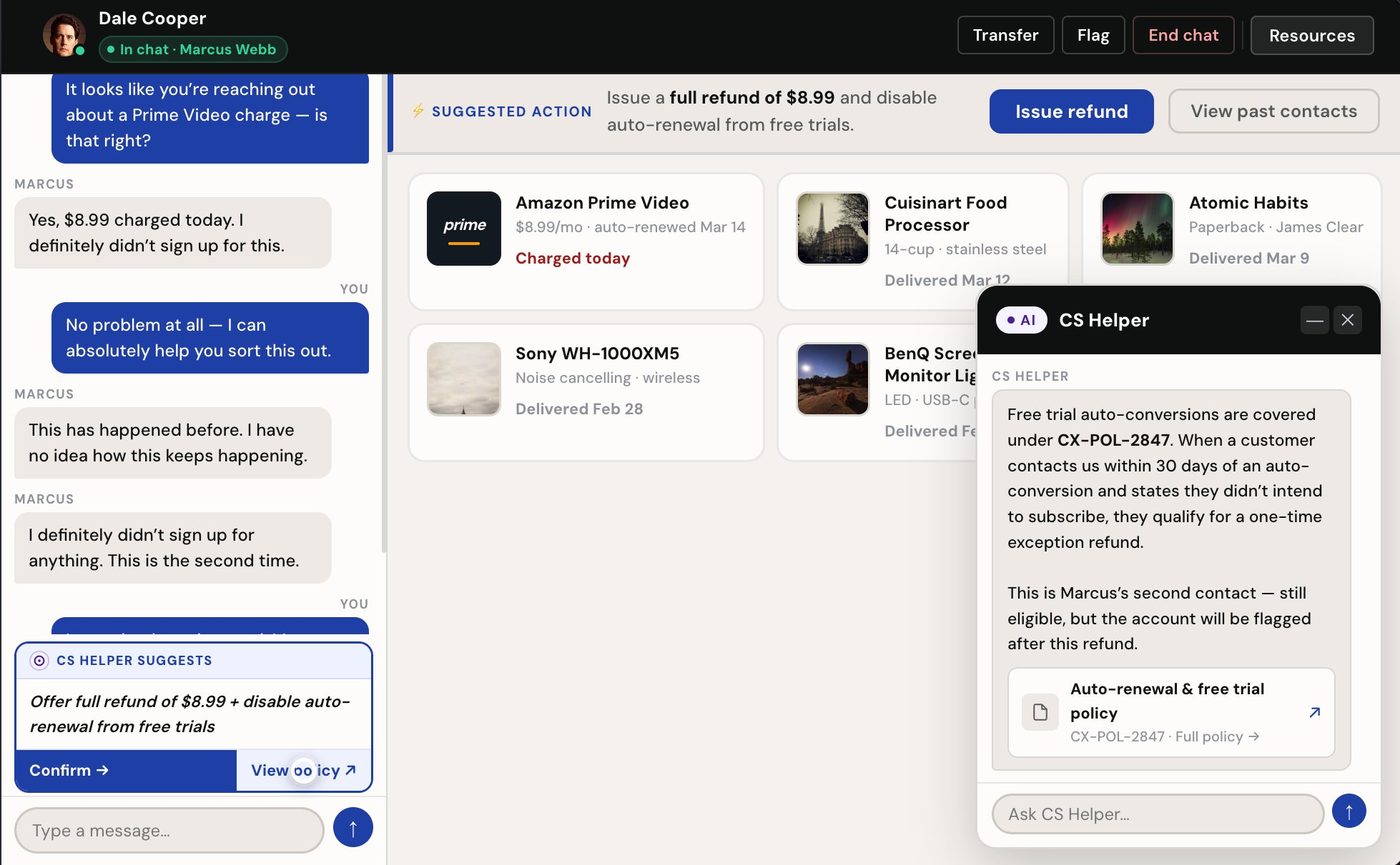

Research surfaced two problems happening simultaneously. Associates were leaving contacts mid-conversation to search documentation, breaking the human moment at exactly the wrong time. And because different associates interpreted policy differently, customers were getting inconsistent answers depending on who picked up.

We built a searchable policy repository into the AC3 workspace so associates could find policies without leaving the tool. The hypothesis: if the information is right there, associates will use it consistently.

We rebuilt CS Helper as a floating AI chat panel alongside the match cards. Associates could ask questions in plain language and get answers specific to the contact in front of them.

CS Helper became a dedicated panel — always present alongside the contact, never a separate tool to open. It proactively surfaced policy answers cited to source. Associates got the answer without searching for it.

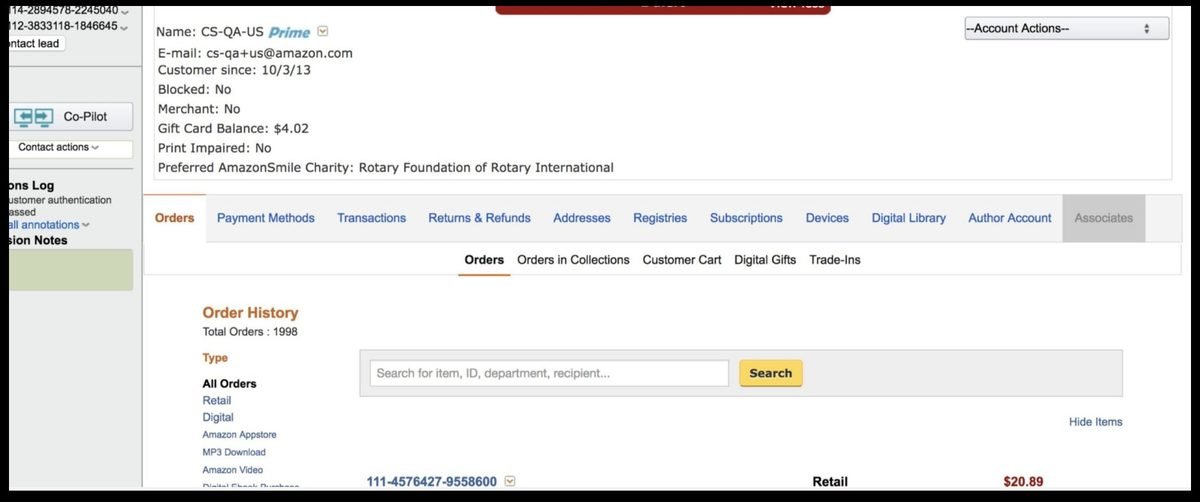

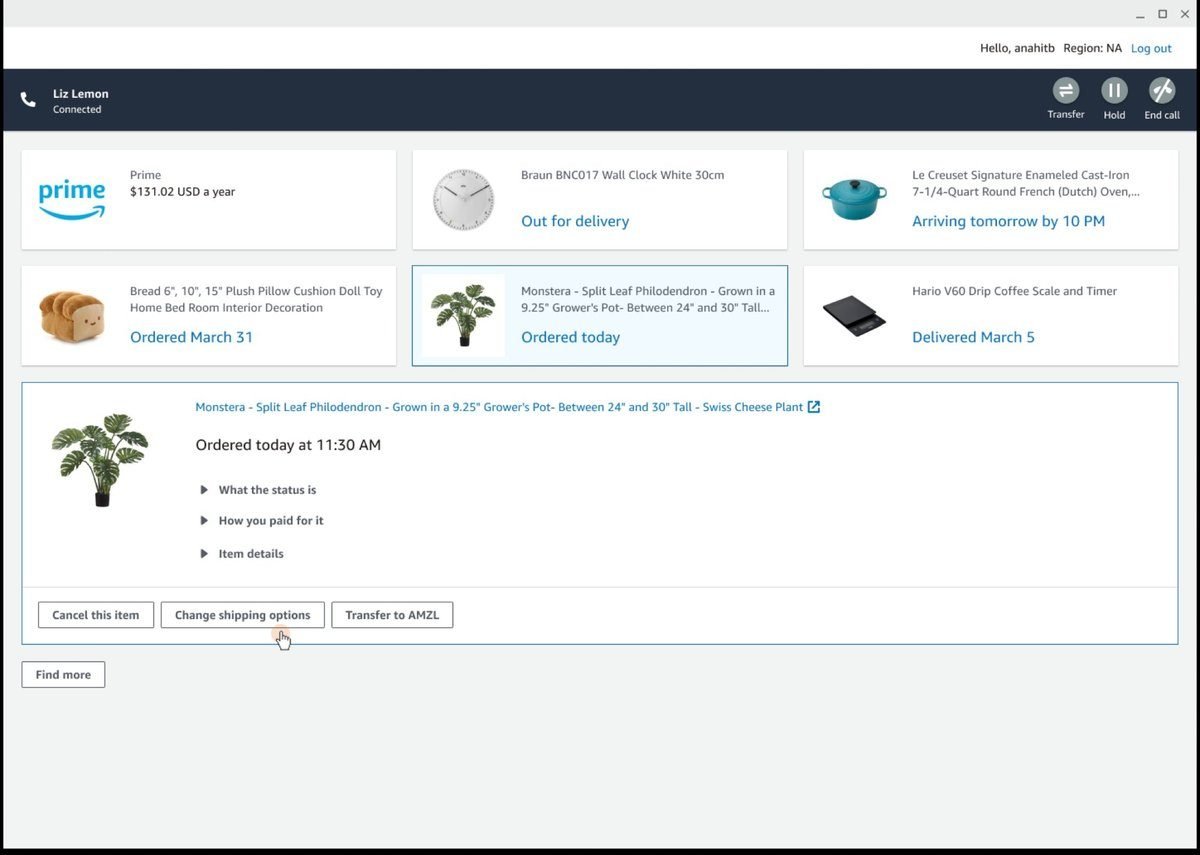

The previous tool was a full case management system: a dense, data-heavy interface where associates had to navigate through complete contact histories, transcripts, and customer records before they could begin helping. When contacts had long histories, associates either spent significant time reading through everything or skipped it entirely and started from scratch. Either way, the customer paid for it.

This was the baseline. Before AC3, associates handled contacts through a full case management system — tabs of raw data, full transcripts, and customer records that had to be read and interpreted before help could begin.

We built an AI-generated summary into the contact workspace as a collapsible drawer. The summary was right there. But prototype testing revealed we had put it in the wrong place.

Before the associate accepts, they see who is calling and the full AI-generated summary of context from prior contacts. No suggested action, just what they need to know.

After accepting, the summary strip remains at the top above the match cards, always visible, always scannable.

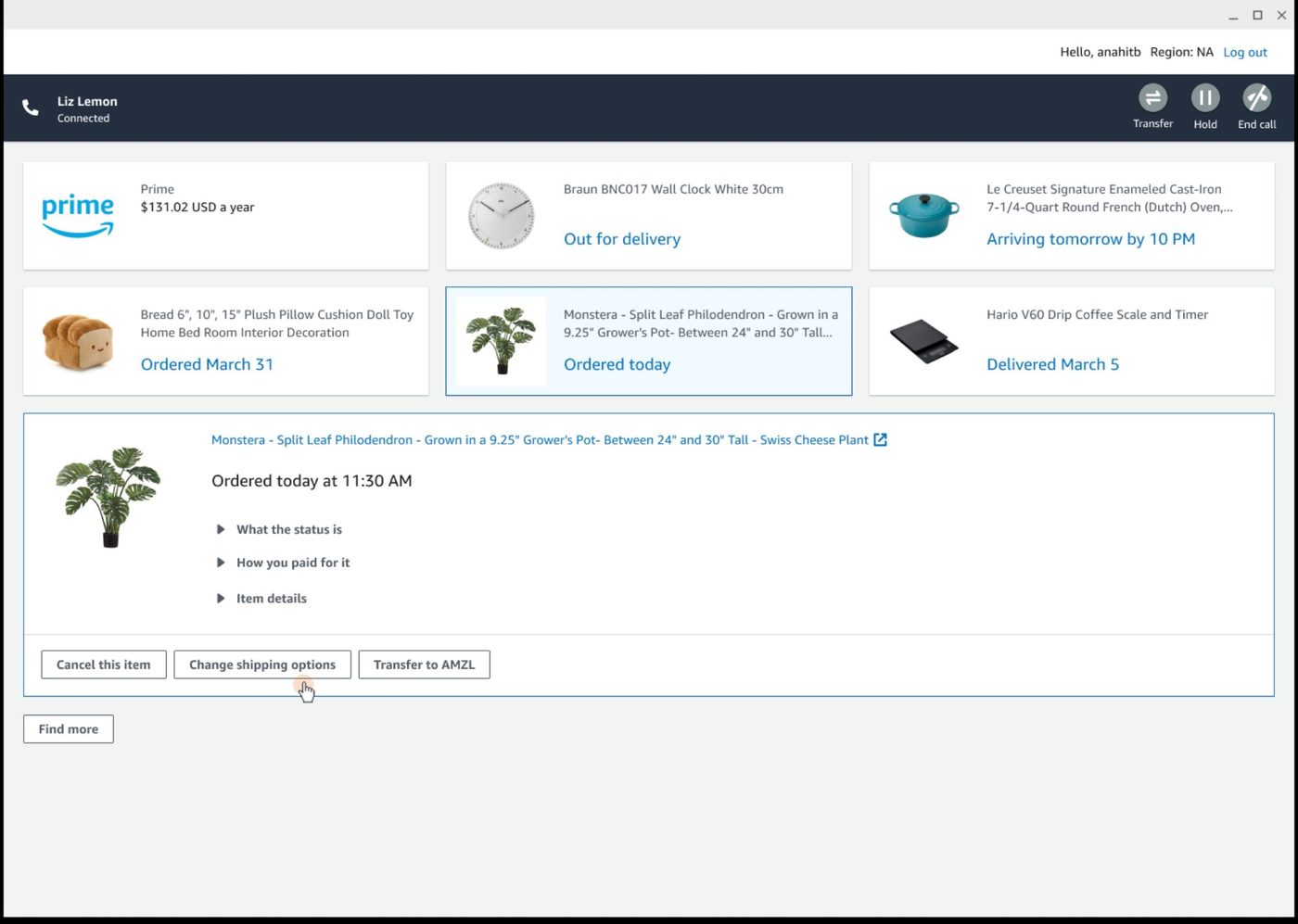

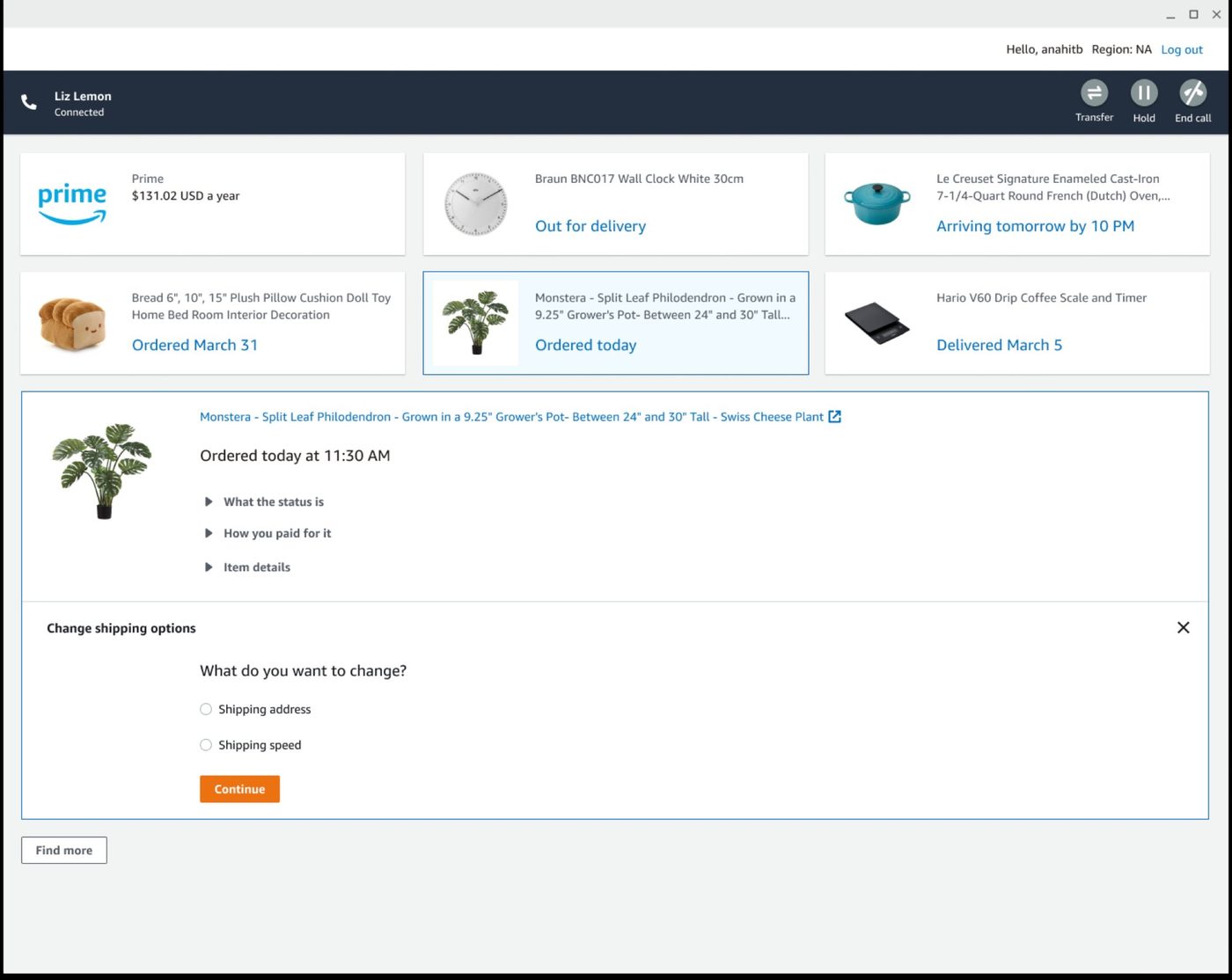

Amazon's automated chatbot was handling a growing volume of contacts without a human involved. But bots have limits: ambiguous situations, edge cases, anything that requires real judgment. When the bot hit those limits, the only option was to hand the contact off entirely to a live associate, starting over. The customer experienced it as a disruption. The efficiency gains evaporated.

When the bot reached a decision requiring human judgment, it surfaced an approval request directly in the chat thread. Simple to build — but the approval looked like just another chat message.

For a large category of contacts, Amazon already had all the information needed to resolve them before the associate even picked up. The item was missing. Amazon knew what it was, when it shipped, where it was delivered, and what the policy said. But the associate still had to navigate through a manual series of questions to reach a resolution that was already predictable. Some customers had even learned which answers produced the outcome they wanted, which generated inaccurate data and made it harder for Amazon to understand and fix the real underlying problems.

This was the baseline. For every contact — regardless of how predictable the outcome — associates navigated the same step-by-step sequence. Amazon already had the data to predict the resolution. The workflow made no use of it.

The first direction: let AI pre-fill the match and solve cards with its best prediction so associates could skip the navigation. It proved the AI could predict correctly. But the presentation created a trust problem we did not anticipate.

Instead of pre-filling the form, we designed a purpose-built AI widget at the top of the workspace. It showed the suggested resolution alongside the supporting evidence. Associates verified rather than navigated.

Built once. Available to everyone.

With AI experiments running across retail, devices, digital, shipping, and Amazon Business simultaneously, there was a real risk of fragmentation: the same problem solved five different ways, five separate times, none of them able to learn from the others. Because my team owned the UX framework, we were in a position to prevent that. Any design pattern that proved out in one business unit could be evaluated for whether it belonged in the shared platform, available to every team, not just the one that built it first. I set up a structured cross-team review process across four organizations. Designers from each vertical brought their work. We identified what was genuinely reusable, refined it, and graduated it into the framework. The result: teams didn't have to reinvent. They could onboard onto proven patterns and focus on what was unique to their context.

Results that moved roadmaps.

Every initiative here was measured against real contacts with real associates, not simulated scenarios or internal demos. The results were strong enough that leadership didn't just note them. They restructured roadmaps around them.

See it in context.

Two interactive prototypes built to the level of fidelity we used for associate testing. Each one walks through a contact type that happens thousands of times a day at Amazon.